Carnegie Mellon University

Pittsburgh, PA

August 2023 - November 2024

Python, Computer Vision, ROS

Linux, System Integration

Introduction and Objective

E-waste is the fastest-growing waste stream globally, with significant environmental and economic implications. This project aims to develop an efficient and generalizable disassembly pipeline for smart devices to increase e-waste processing rates and improve material recovery. The focus is on automating and enhancing the separation of components from devices like phones, tablets, and smartwatches, contributing to sustainable e-waste recycling practices.

The scope of the project covered the entire disassembly pipeline, from the initial reception of an end-of-life device, through the device's classification and disassembly, to the final sorting of liberated components on a conveyor belt. I worked with a team of researchers to develop a robust pipeline, and I was primarily responsible for developing and integrating the sorting system.

Methods and Technical Details

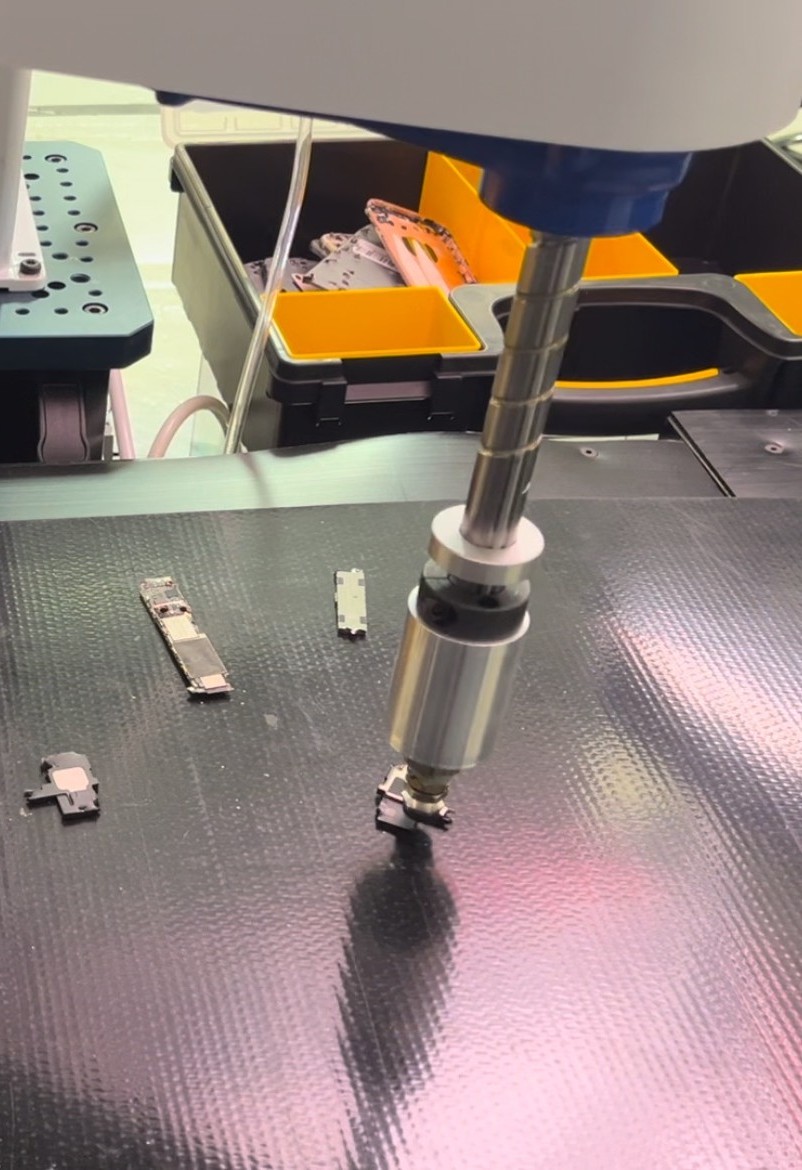

I developed the sortation station’s detection and sorting systems, integrating machine learning, computer vision, and robotics to automate the disassembly pipeline. I transitioned the component detection system from a U-Net-based segmentation model to YOLOv8 for real-time instance segmentation, improving accuracy and speed in identifying components once liberated from devices. This system was integrated with a SCARA robot, which used a controlled suction mechanism to sort components onto a conveyor.
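To illustrate how a segmentation result can become a suction target, the sketch below computes the area-weighted centroid of a component outline and maps it to robot coordinates. It is a minimal illustration and not the project's actual code: the function names, the `mm_per_pixel` scale, and the assumption of an overhead camera aligned with the robot's axes are all hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def polygon_centroid(polygon: List[Point]) -> Point:
    """Area-weighted centroid of a simple polygon (shoelace formula)."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least three vertices")
    area2 = 0.0  # twice the signed area
    cx = 0.0
    cy = 0.0
    for i, (x0, y0) in enumerate(polygon):
        x1, y1 = polygon[(i + 1) % len(polygon)]
        cross = x0 * y1 - x1 * y0
        area2 += cross
        cx += (x0 + x1) * cross
        cy += (y0 + y1) * cross
    if area2 == 0:
        raise ValueError("degenerate polygon with zero area")
    # Centroid = sum / (6 * area) and area = area2 / 2, hence 3 * area2.
    return cx / (3.0 * area2), cy / (3.0 * area2)


def pixel_to_robot(pixel: Point, mm_per_pixel: float, origin_mm: Point) -> Point:
    """Map an image point to robot XY (assumed: overhead camera, axes aligned)."""
    return (origin_mm[0] + pixel[0] * mm_per_pixel,
            origin_mm[1] + pixel[1] * mm_per_pixel)


# Example: a 4 x 2 pixel rectangle, 0.5 mm per pixel, robot origin at (100, 50) mm.
outline = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
target = pixel_to_robot(polygon_centroid(outline), 0.5, (100.0, 50.0))
```

One caveat with this design: for concave outlines the centroid can fall outside the component, so a real system would likely need a check that the chosen point lies on the part before commanding the suction cup.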

I designed and implemented a custom ROS program to synchronize the detection system, robotic arm, and conveyor belt, ensuring seamless coordination. I also led the selection of the camera model and system layout to optimize visual capture of components.
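The coordination logic can be pictured as a small state machine. The sketch below is ROS-free and purely illustrative: the stage names, event names, and the `SortingCoordinator` class are assumptions, not the actual program. In a ROS system, each event would typically arrive as a topic message or an action result.

```python
from enum import Enum, auto


class Stage(Enum):
    WAIT_FOR_DETECTION = auto()
    PICK = auto()
    PLACE = auto()
    ADVANCE_CONVEYOR = auto()


class SortingCoordinator:
    """Hypothetical coordinator: advances one stage per completed event."""

    # For each stage: the event that completes it, and the stage that follows.
    _TRANSITIONS = {
        Stage.WAIT_FOR_DETECTION: ("component_detected", Stage.PICK),
        Stage.PICK: ("pick_done", Stage.PLACE),
        Stage.PLACE: ("place_done", Stage.ADVANCE_CONVEYOR),
        Stage.ADVANCE_CONVEYOR: ("conveyor_done", Stage.WAIT_FOR_DETECTION),
    }

    def __init__(self) -> None:
        self.stage = Stage.WAIT_FOR_DETECTION

    def handle(self, event: str) -> bool:
        """Apply an event; return True if it advanced the stage, False if ignored."""
        expected, next_stage = self._TRANSITIONS[self.stage]
        if event != expected:
            return False  # out-of-order events leave the stage unchanged
        self.stage = next_stage
        return True


coordinator = SortingCoordinator()
coordinator.handle("component_detected")  # detection hands off to the arm
```

Ignoring out-of-order events keeps the arm and conveyor from acting on stale messages, at the cost of requiring every subsystem to report completion explicitly.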

Additionally, I created an annotation tool built on Meta’s Segment Anything Model (SAM) to quickly generate segmentation masks. I then collected and labeled training data and compared the performance of multiple models, including YOLO and Mask R-CNN.
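As an illustration of the last step in such an annotation workflow, the sketch below writes one object in the Ultralytics YOLO segmentation label format: a class index followed by polygon coordinates normalized to the image size. It assumes the mask outline has already been extracted as a pixel-space polygon (for example, by a contour-finding step); the function name and that upstream step are hypothetical and not taken from the actual tool.

```python
from typing import List, Tuple


def to_yolo_seg_line(class_id: int,
                     polygon: List[Tuple[float, float]],
                     width: int,
                     height: int) -> str:
    """Format one object as 'class x1 y1 x2 y2 ...' with coordinates in [0, 1]."""
    if len(polygon) < 3:
        raise ValueError("a segmentation polygon needs at least three points")
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    fields = [str(class_id)]
    for x, y in polygon:
        # Normalize by image size and clamp points that fall slightly outside the frame.
        nx = min(max(x / width, 0.0), 1.0)
        ny = min(max(y / height, 0.0), 1.0)
        fields.append(f"{nx:.6f}")
        fields.append(f"{ny:.6f}")
    return " ".join(fields)


# Example: a triangular outline in a 640 x 480 image, labeled as class 2.
line = to_yolo_seg_line(2, [(0, 0), (320, 0), (320, 240)], 640, 480)
```

Each image then gets a text file with one such line per labeled component.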

Results and Evaluation

No quantitative metrics are available, but in initial tests the system identified and sorted components reliably across a variety of devices. Moving to YOLOv8 made real-time instance segmentation faster and more accurate at recognizing individual components. Gripping remained a challenge: components that were deformed or had complex shapes were sometimes missed.